This blog is built with Hugo, an open-source static site generator. Static websites require no server-side processing, which makes them easier to host and opens up new hosting possibilities.

There are many options out there, but I deploy my website to AWS S3, using CloudFront to distribute it globally (a.k.a. make it fast).

Here is the why and the how.

Drone.io

I keep the website source code on my private Gitea server, using Drone.io to build and deploy it.

This is the configuration for my production pipeline:

    ---
    kind: pipeline
    type: ssh
    name: production

    trigger:
      event:
        - promote
      target:
        include:
          - production
          - production-force

    server:
      host: build.lan.uctrl.net
      user: hebron
      password:
        from_secret: password

    steps:
      - name: initialize
        commands:
          - git submodule update --init
          - ln -s /home/hebron/hugo_resources/production resources

      - name: build
        commands:
          - hugo --gc -b https://blog.cavelab.dev/

      - name: cleanup
        commands:
          - rm public/style.css
          - rm public/assets/main.js
          - rm public/assets/prism.js
          - rm public/assets/style.css

      - name: s3-deploy
        environment:
          AWS_ACCESS_KEY_ID:
            from_secret: AWS_ACCESS_KEY_ID
          AWS_SECRET_ACCESS_KEY:
            from_secret: AWS_SECRET_ACCESS_KEY
        commands:
          - hugo deploy --maxDeletes -1
        when:
          target:
            - production

      - name: s3-deploy-force
        environment:
          AWS_ACCESS_KEY_ID:
            from_secret: AWS_ACCESS_KEY_ID
          AWS_SECRET_ACCESS_KEY:
            from_secret: AWS_SECRET_ACCESS_KEY
        commands:
          - hugo deploy --maxDeletes -1 --force
        when:
          target:
            - production-force

So what happens here?

The SSH pipeline is triggered when a build is promoted to production or production-force. It uses a local LXC container to build.

Now for the build steps:

- Initialize
  - Git submodules are initialized (the theme is pulled)
  - A symbolic link is created for the resources folder; this keeps assets and images from being rebuilt each time
- Build
  - Hugo build, with garbage collection (cleaning up unused resources), and base URL https://blog.cavelab.dev/
- Cleanup
  - Deleting some unused files left by the theme
- S3-deploy
  - If promoted to production
    - Hugo deploy, sync public folder to the S3 bucket. Only touch changed or deleted files.
  - If promoted to production-force
    - Hugo deploy, force upload entire public folder to the S3 bucket. Useful if the deployment configuration is changed.
  - --maxDeletes -1 means it won’t fail even if lots of files are scheduled for deletion (default 256)
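To make the target-dependent steps concrete, here is a minimal Python sketch of which steps run for each promotion target. It only models the `when: target` conditions from the pipeline above and is not how Drone itself is implemented; `STEPS` and `steps_for` are names made up for this illustration.

```python
# Step names and targets are copied from the pipeline configuration above.
# None means the step has no `when` condition and therefore always runs.
STEPS = [
    ("initialize", None),
    ("build", None),
    ("cleanup", None),
    ("s3-deploy", {"production"}),
    ("s3-deploy-force", {"production-force"}),
]


def steps_for(target):
    """Return the names of the steps that would run for a promotion target."""
    return [
        name
        for name, targets in STEPS
        if targets is None or target in targets
    ]


if __name__ == "__main__":
    for target in ("production", "production-force"):
        print(target, "->", ", ".join(steps_for(target)))
```

Both targets share the initialize, build and cleanup steps; only the final deploy step differs.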

Hugo deployment

How the hugo deploy command behaves is controlled with the configuration below:

    [deployment]

    [[deployment.targets]]
    name = "aws-s3"
    URL = "s3://my-blog-bucket?region=eu-central-1"
    cloudFrontDistributionID = "xxxxxxxxxxxxxx"

    [[deployment.matchers]]
    pattern = "^sitemap\\.xml$"
    cacheControl = "public, s-maxage=604800, max-age=86400" #7d,1d
    contentType = "application/xml"

    [[deployment.matchers]]
    pattern = "^.+\\.(css|js)$"
    cacheControl = "public, immutable, max-age=31536000" #1y

    [[deployment.matchers]]
    pattern = "(?i)^.+_hu[0-9a-f]{32}_.+\\.(jpg|jpeg|gif|png|webp)$"
    cacheControl = "public, immutable, max-age=31536000" #1y

    [[deployment.matchers]]
    pattern = "(?i)^.+\\.(jpg|jpeg|gif|png|webp|mp4|woff|woff2)$"
    cacheControl = "public, s-maxage=7776000, max-age=604800" #90d,7d

    [[deployment.matchers]]
    pattern = "^.+\\.(html|xml|json|txt)$"
    cacheControl = "public, s-maxage=604800, max-age=3600" #7d,1h

Let’s go through it:

deployment.targets tells Hugo where to deploy. In my case, that is the AWS S3 bucket my-blog-bucket in the eu-central-1 region. The CloudFront distribution with the specified ID is invalidated when the deployment is done.
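The target URL packs the bucket name and the region into one string. As a small illustration of how it breaks down, here is a Python sketch using only the standard library. It is not Hugo's own parsing code, and `parse_target_url` is a hypothetical helper name.

```python
from urllib.parse import parse_qs, urlsplit


def parse_target_url(url):
    """Split a deployment target URL into scheme, bucket and region."""
    parts = urlsplit(url)
    query = parse_qs(parts.query)
    return {
        "scheme": parts.scheme,
        "bucket": parts.netloc,
        # parse_qs returns a list per key; take the first value if present
        "region": query.get("region", [None])[0],
    }


if __name__ == "__main__":
    print(parse_target_url("s3://my-blog-bucket?region=eu-central-1"))
```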

deployment.matchers sets the behaviour for different file patterns.

Patterns:

- Patterns starting with (?i) are case insensitive.
- Searching is stopped on first match.
- More info in the documentation.

Cache-control:

- immutable: resource will not change over time.
- max-age: maximum time, in seconds, a resource is considered fresh.
- s-maxage: overrides max-age, but only for shared caches (e.g., CDNs and proxies).

By using both max-age and s-maxage I can instruct CloudFront to keep the files longer than the client browser. This makes sense because I can purge CloudFront, but not the clients.
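To show how the two directives interact, here is a minimal Python sketch that reads a Cache-Control value and returns how long a response stays fresh in a shared cache versus a browser cache. It is a simplification that only looks at max-age and s-maxage, and the function names are made up for this example.

```python
def parse_cache_control(header):
    """Parse a Cache-Control value into a dict of directives."""
    directives = {}
    for part in header.split(","):
        part = part.strip().lower()
        if not part:
            continue
        name, _, value = part.partition("=")
        # Numeric directives become ints, flags such as "public" become True
        directives[name] = int(value) if value.isdigit() else True
    return directives


def freshness_lifetime(header, shared_cache):
    """Seconds a response stays fresh, for a shared or a private cache."""
    directives = parse_cache_control(header)
    if shared_cache and "s-maxage" in directives:
        return directives["s-maxage"]
    return directives.get("max-age", 0)


if __name__ == "__main__":
    header = "public, s-maxage=604800, max-age=3600"
    print("shared cache :", freshness_lifetime(header, shared_cache=True), "seconds")
    print("browser cache:", freshness_lifetime(header, shared_cache=False), "seconds")
```

With the header used for HTML files, a shared cache keeps the response for 604800 seconds (seven days) while the browser keeps it for 3600 seconds (one hour).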

- ^sitemap\\.xml$
  - Set the cache-control header for sitemap.xml, and make the content type application/xml (default for xml is application/rss+xml).
- ^.+\\.(css|js)$
  - Set the cache-control header for all css and js files. These are fingerprinted and can be cached for a long time.
- (?i)^.+_hu[0-9a-f]{32}_.+\\.(jpg|jpeg|gif|png|webp)$
  - Matches Hugo processed images. The filenames contain an MD5 checksum, so we can cache them for a long time as well.
- (?i)^.+\\.(jpg|jpeg|gif|png|webp|mp4|woff|woff2)$
  - Matches any image, video, or font file. These files may change while keeping the same filename, so we cache them for three months on CloudFront, but only seven days on the client.
- ^.+\\.(html|xml|json|txt)$
  - Matches text content. Cache for a week on CloudFront, but keep the client cache short.
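Because searching stops on the first match, the order of the matchers matters. The Python sketch below walks the same five patterns in order to show which header a file ends up with. It is an approximation: Hugo uses Go's regular expressions, and I am assuming Python's `re` module treats these particular patterns the same way. The file paths in the example are invented.

```python
import re

# Patterns and headers copied from the configuration above,
# with the TOML escaping (\\.) reduced to a plain regex escape (\.).
MATCHERS = [
    (r"^sitemap\.xml$", "public, s-maxage=604800, max-age=86400"),
    (r"^.+\.(css|js)$", "public, immutable, max-age=31536000"),
    (r"(?i)^.+_hu[0-9a-f]{32}_.+\.(jpg|jpeg|gif|png|webp)$",
     "public, immutable, max-age=31536000"),
    (r"(?i)^.+\.(jpg|jpeg|gif|png|webp|mp4|woff|woff2)$",
     "public, s-maxage=7776000, max-age=604800"),
    (r"^.+\.(html|xml|json|txt)$", "public, s-maxage=604800, max-age=3600"),
]


def cache_control_for(path):
    """Return the header of the first matcher that fits, or None."""
    for pattern, cache_control in MATCHERS:
        if re.search(pattern, path):
            return cache_control
    return None


if __name__ == "__main__":
    # Hypothetical paths, relative to the public folder
    processed = "images/cat_hu" + "0123456789abcdef" * 2 + "_0_800x0_resize_q75_box.jpg"
    for path in ("sitemap.xml", "index.xml", "assets/style.min.css",
                 processed, "images/cat.JPG", "index.html"):
        print(path, "->", cache_control_for(path))
```

Note that a processed image would also fit the generic image pattern, so it only gets the one-year header because its matcher is listed first.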

Why S3?

So why do I choose S3+CloudFront when there are so many options out there?

Well, I like the simplicity. Now — I realize that may sound counter-intuitive as AWS can be pretty complex… But once you’ve figured out how it fits together, it’s rather simple.

I build the site locally and upload the files to a bucket, which CloudFront delivers. I have full control, and if I need something special, there is always Lambda@Edge.

By specifying different behaviours in CloudFront, I can pull in files from other origins. For example, /video/*/thumbnail_*.jpg comes from a different S3 bucket.

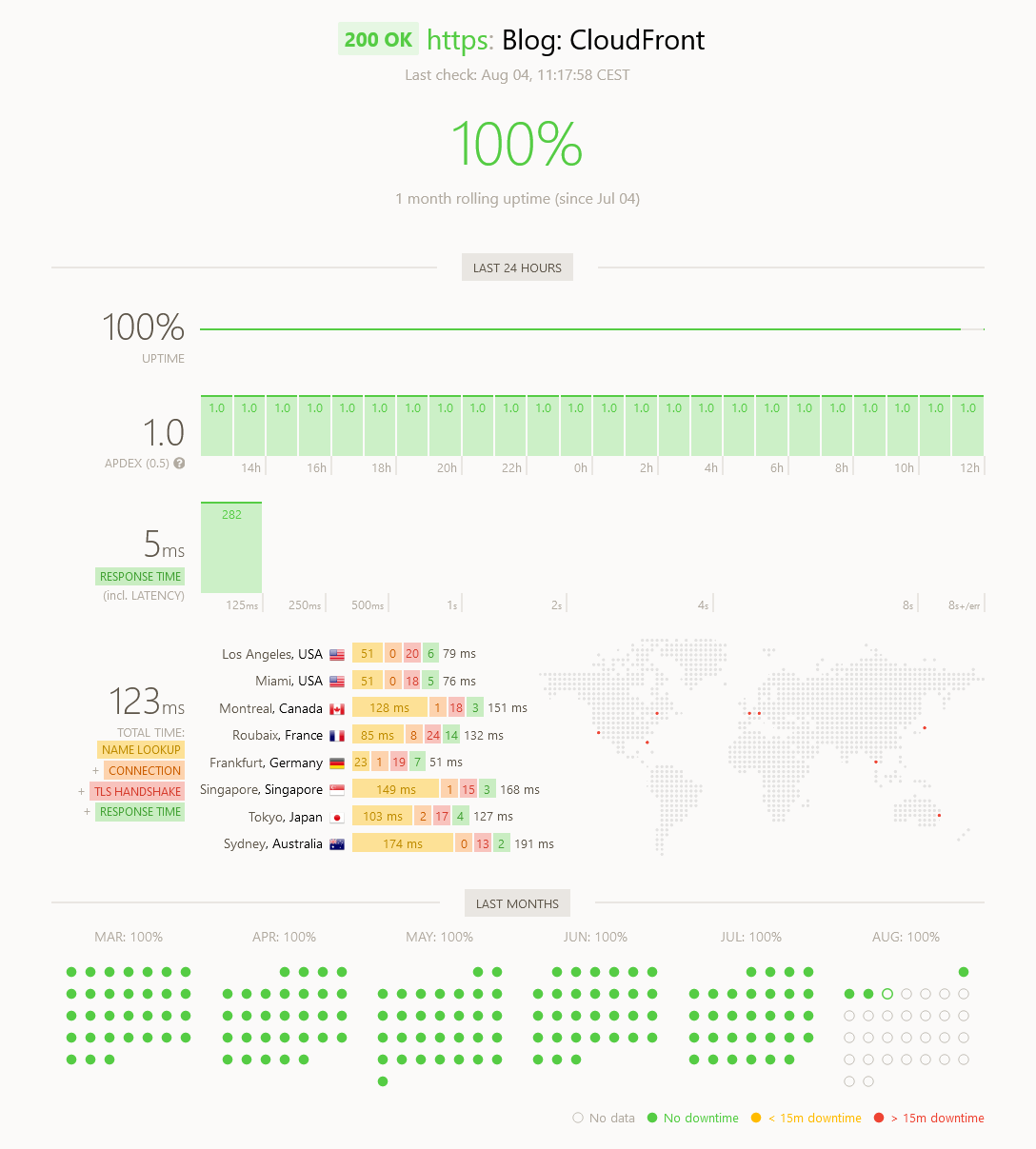
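The behaviour routing can be pictured as an ordered list of path patterns with a default at the end. Below is a rough Python sketch of that idea using `fnmatchcase` as a stand-in for CloudFront's wildcard matching. The origin names `video-bucket` and `blog-bucket` are hypothetical, and `fnmatchcase` also understands `[...]` character sets, which I am not claiming CloudFront does.

```python
from fnmatch import fnmatchcase

# Ordered list of (path pattern, origin); the first matching pattern wins.
# The pattern is the one mentioned above, the origin names are made up.
BEHAVIOURS = [
    ("/video/*/thumbnail_*.jpg", "video-bucket"),
]

# Everything that matches no pattern goes to the default origin.
DEFAULT_ORIGIN = "blog-bucket"


def origin_for(path):
    """Return the origin that would serve the given request path."""
    for pattern, origin in BEHAVIOURS:
        if fnmatchcase(path, pattern):
            return origin
    return DEFAULT_ORIGIN


if __name__ == "__main__":
    for path in ("/video/2021-trip/thumbnail_01.jpg",
                 "/video/2021-trip/clip.mp4",
                 "/posts/index.html"):
        print(path, "->", origin_for(path))
```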

CloudFront is also very performant, as shown by savjee.be:

The best all-around performer is AWS CloudFront, followed closely by GitHub Pages. Not only do they have the fastest response times (median), they’re also the most consistent.

One thing that I haven’t implemented yet is turning Hugo aliases into proper redirects, like I wrote about for Nginx and Firebase. This is a bit challenging on S3+CloudFront, but quite easy on other platforms.

Most services, like Netlify and Vercel, want to connect to your git repository and build the site for you. I don’t want that; I want to build the site locally. Then I have access to my local logistics system API, and it doesn’t matter if Hugo takes a long time processing images.

Experiences

I did try a few other services, before settling on AWS.

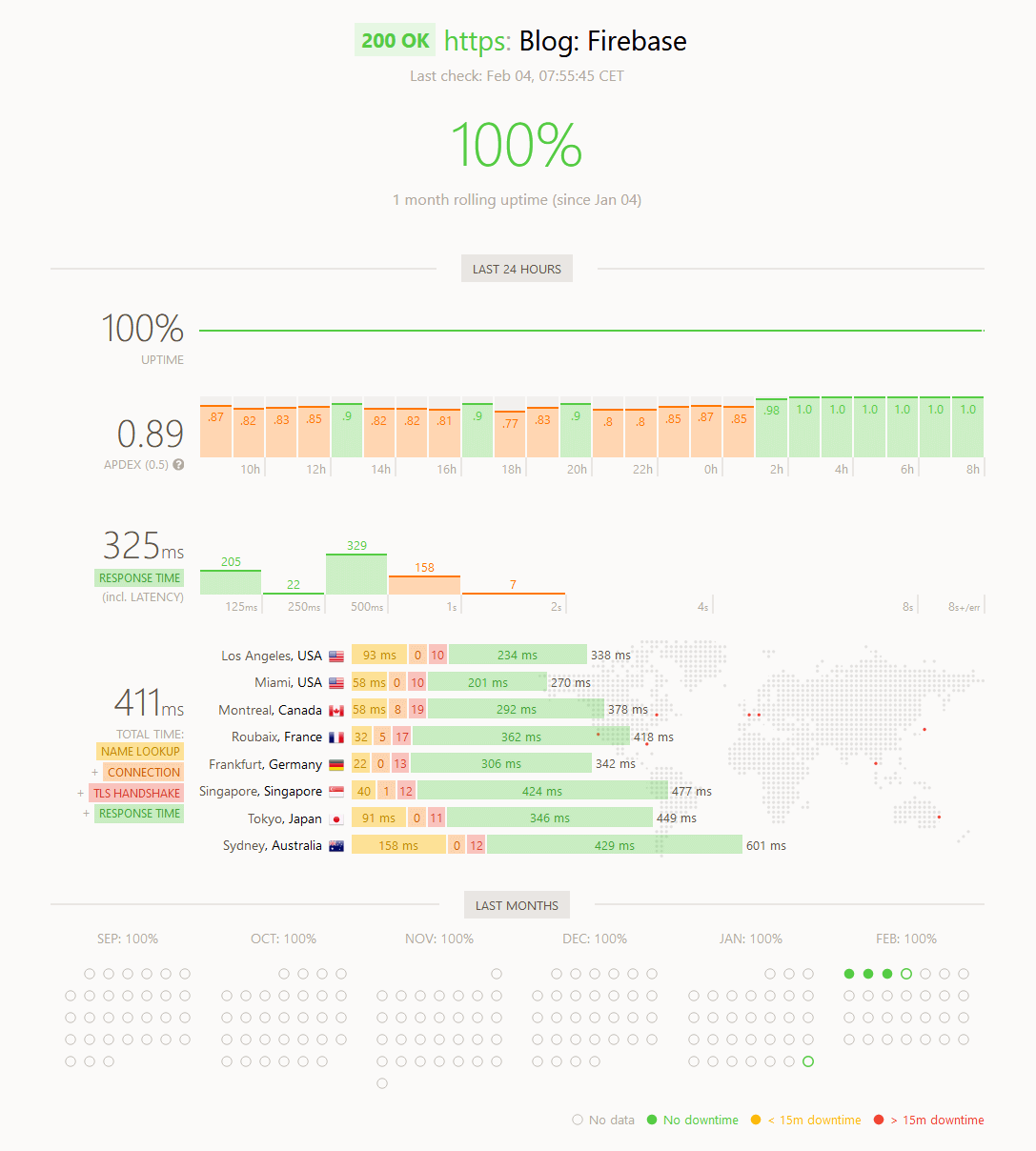

Firebase

This was my first choice. I really wanted to like Firebase: build locally and push the files. But it was slow, sometimes with weird delays of many seconds. And bandwidth, once you go above the free 360 MB/day, is quite expensive at $0.15/GB.

Towards the end of my testing, it just suddenly became faster. Not sure what happened, but it’s quite visible on the screenshot below.

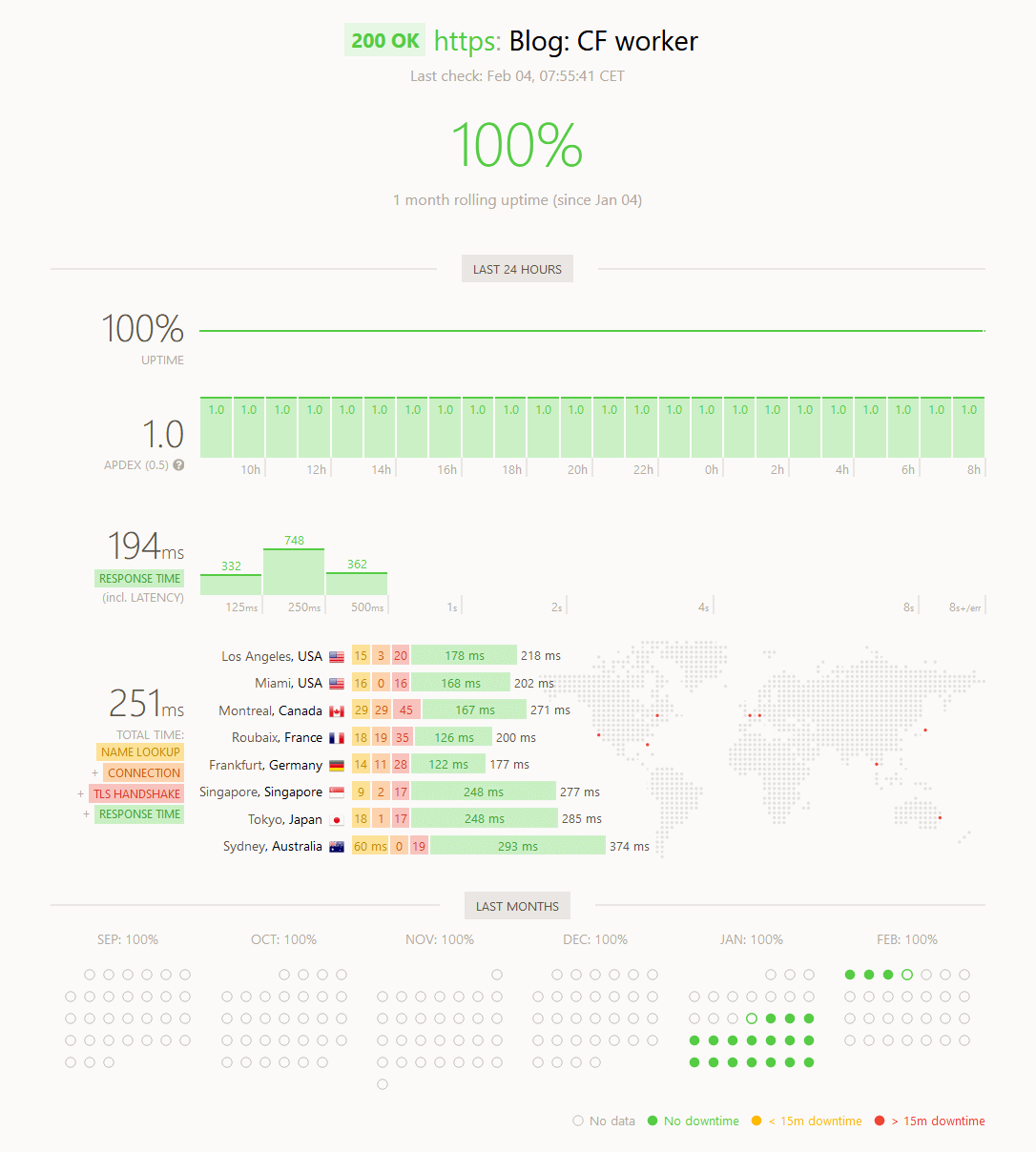

CloudFlare worker

Next I tried CloudFlare worker. This costs $5/month and was mostly good — but a bit slower than I had expected. I also found the whole worker thing rather confusing, but that’s just me.

To my surprise, I experienced one incident of TLS handshake timeouts on the Oslo server.

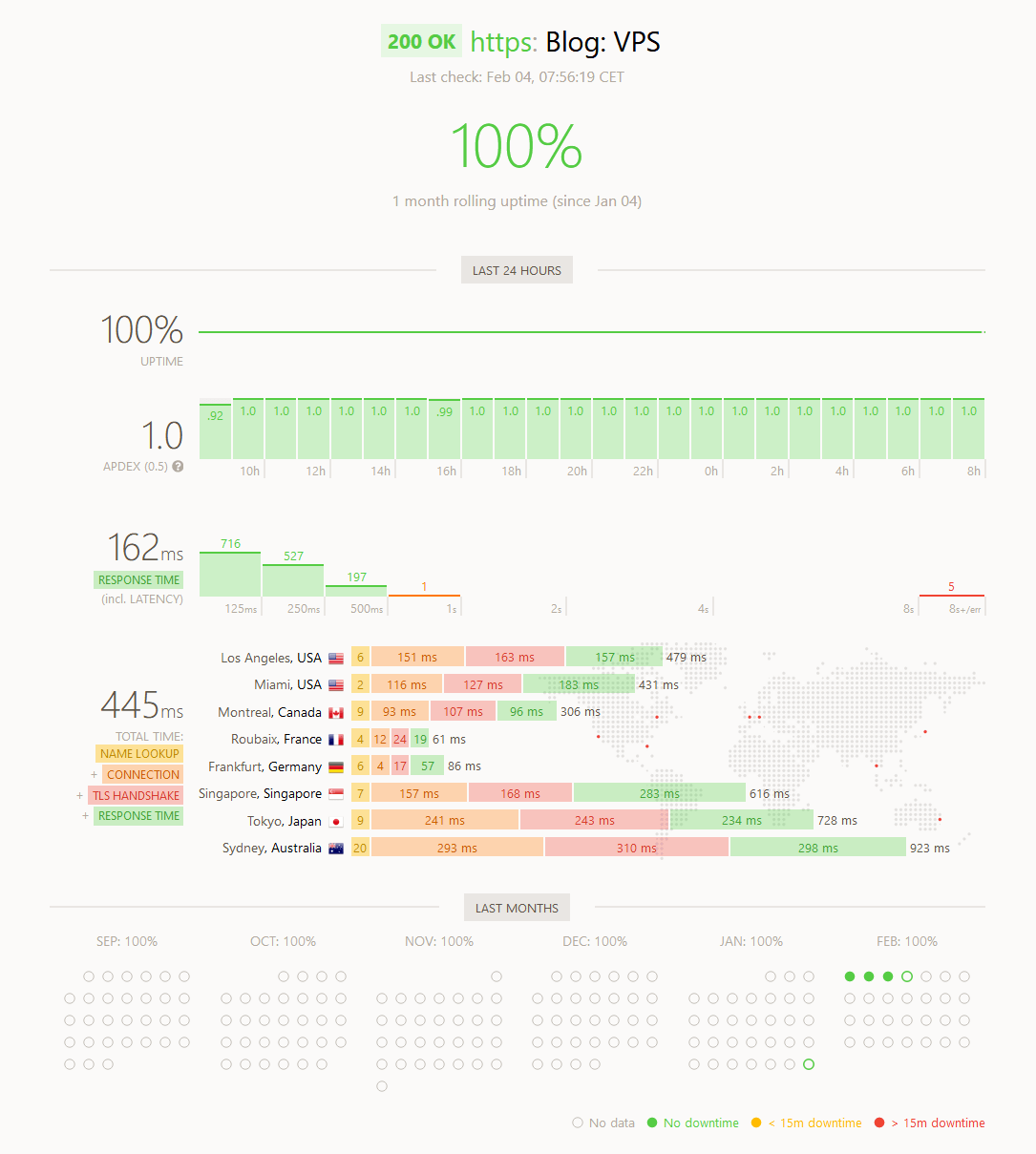

VPS

I tried using a VPS for a short while, and it behaved just as expected. As the virtual server was located in Germany, it had low response times in Europe, but high everywhere else.

Plus, I didn’t want to keep a VPS just for a static website…

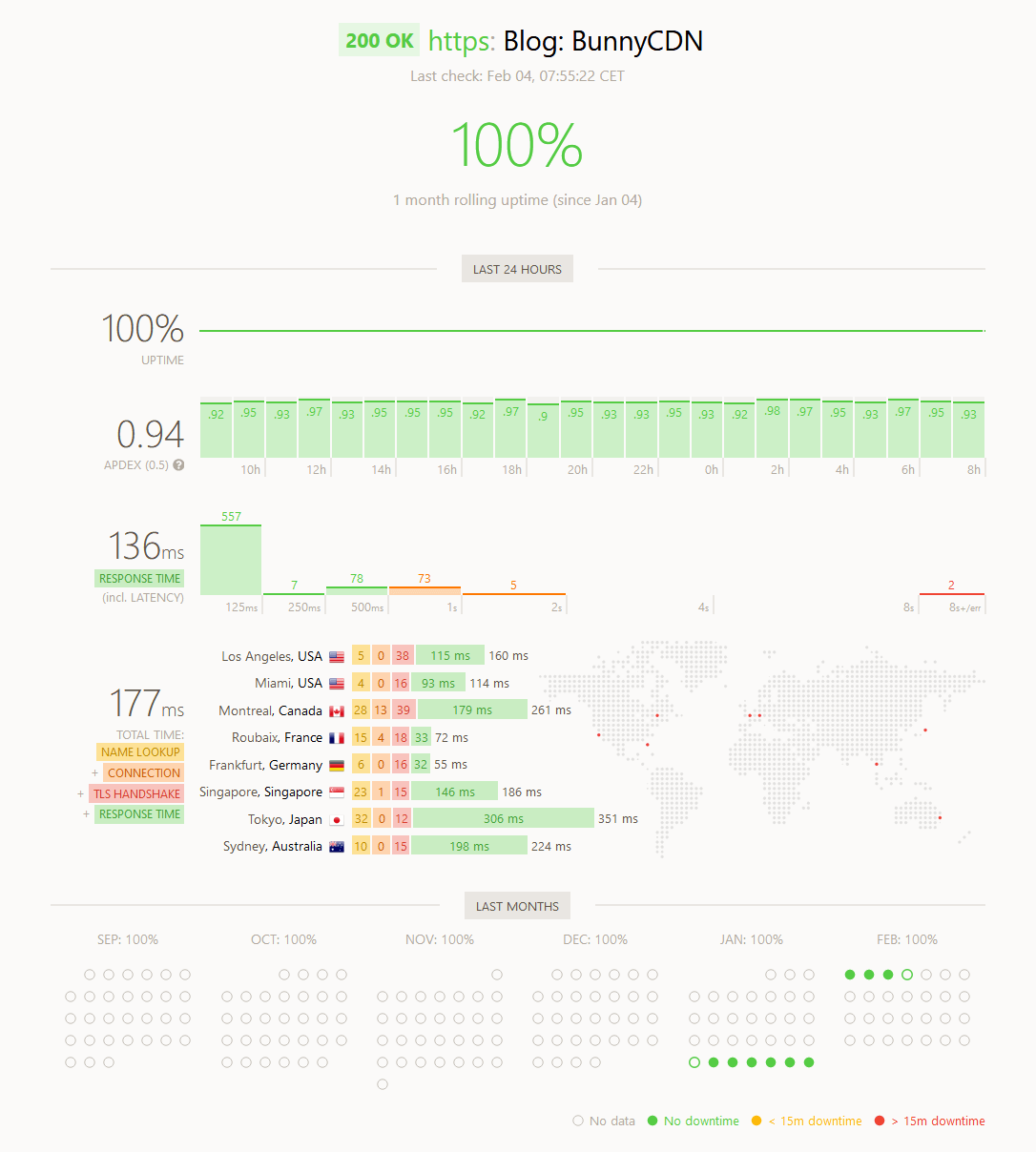

BunnyCDN and VPS

Then I tried putting BunnyCDN, or Bunny.net as they are called now, in front of the VPS. That reduced the response times considerably. I did notice some slow-downs which I couldn’t explain, with the site taking over one second to load.

The pricing is roughly an order of magnitude lower than both Firebase and CloudFront, starting at $0.01/GB for Europe and North America.

I didn’t try their edge-storage, as they don’t have a nice API for their storage zones. I’d really like to see something like rsync or S3 support. I’m not too fond of FTP…

S3 and CloudFront

Lastly it’s S3 and CloudFront, where I am hosting the site today. And as you can see below, the performance is consistently good, with a server response time below 10 ms. The slowest part is the Route53 name lookup ¯\_(ツ)_/¯

The pricing is in between Bunny.net and Firebase, starting at $0.085/GB in Europe and the Americas.
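To put the per-GB prices side by side, here is a small Python sketch that multiplies them by a monthly traffic figure. The prices are the starting prices quoted in this post; the 100 GB traffic figure is purely an example, and free tiers, request fees and regional differences are ignored.

```python
# Starting prices per GB in USD, as quoted in this post.
# Free tiers, request fees and regional price differences are ignored.
PRICE_PER_GB = {
    "Bunny.net": 0.01,
    "CloudFront": 0.085,
    "Firebase": 0.15,
}


def monthly_cost(gigabytes):
    """Return the bandwidth cost in USD per provider for the given traffic."""
    return {
        name: round(price * gigabytes, 2)
        for name, price in PRICE_PER_GB.items()
    }


if __name__ == "__main__":
    # 100 GB per month is a made-up figure, not my actual traffic
    for name, cost in monthly_cost(100).items():
        print(f"{name}: ${cost:.2f}")
```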

Wrapping it up

I’ve considered trying other hosting services, but keep falling back to S3+CloudFront. It’s simple after the initial setup, works great, and has everything I need.

I have some ideas for how to convert Hugo aliases into proper redirects, but that’s a story for another time.

Some services, like Netlify, are free — as long as you stay within the plan limits. But it can become expensive if you exceed them. With AWS I just pay for what I use, no freemium. I like that better 👍

Last commit 2024-11-11, with message: Add lots of tags to posts.