My file server, Zeta, has an mdadm RAID6 array with eight 4 TB disks. I want to expand, but before doing so I’m going to migrate to ZFS.

My current RAID6 array has 24 TB of usable space. I’m using about 80% of that.


The plan

- Set up a new RAIDz1 pool with 4×8 TB disks.

  - This gives me 24 TB usable space, same as my current RAID6 array.

- Copy all data from the RAID6 array to the temporary ZFS pool.

- Destroy the mdadm array.

- Create a new RAIDz2 pool with 8×4 TB disks, name it tank0.

- Copy all data from the temporary RAIDz1 pool to the new RAIDz2 pool.

- Destroy the temporary RAIDz1 pool.

- Add a new VDEV with 4×8 TB and 4×2 TB disks to the tank0 pool.

  - That adds another 12 TB of usable space, 36 in total.

  - The 4×2 TB disks are just because that’s what I have.

- Replace 2 TB disks with 8 TB, eventually.
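The capacity numbers in the plan can be sanity-checked with a few lines of Python. This is only a rough sketch: the helper `raidz_usable_tb` is a hypothetical name, it assumes the second VDEV is also RAIDz2 (which is what the 12 TB figure implies), and it ignores filesystem overhead and the TB/TiB difference.

```python
# Rough usable-capacity estimate for the pools in the plan.
# Assumption: a RAIDZ vdev treats every disk as if it were the size of its
# smallest member and gives up `parity` disks' worth of space.
# Filesystem overhead and TB vs. TiB are ignored.

def raidz_usable_tb(disk_sizes_tb, parity):
    """Approximate usable TB of a single RAIDZ vdev."""
    if len(disk_sizes_tb) <= parity:
        raise ValueError("a RAIDZ vdev needs more disks than its parity level")
    return (len(disk_sizes_tb) - parity) * min(disk_sizes_tb)

temp_pool = raidz_usable_tb([8] * 4, parity=1)        # temporary RAIDz1 pool
vdev1 = raidz_usable_tb([4] * 8, parity=2)            # first RAIDz2 vdev in tank0
vdev2 = raidz_usable_tb([8] * 4 + [2] * 4, parity=2)  # mixed second vdev (assumed RAIDz2)

print(temp_pool, vdev1, vdev2, vdev1 + vdev2)  # 24 24 12 36
```

The mixed VDEV only yields 12 TB because every disk in it counts as 2 TB until the small ones are gone.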

New disks

I only had one 8 TB WD Red disk, but for the plan to work I needed four. I ordered three Toshiba N300s; they were much cheaper and had good reviews.

To fill up the 2nd VDEV I just used four 2 TB disks that I had lying around. I will replace them when I need to expand in the future.

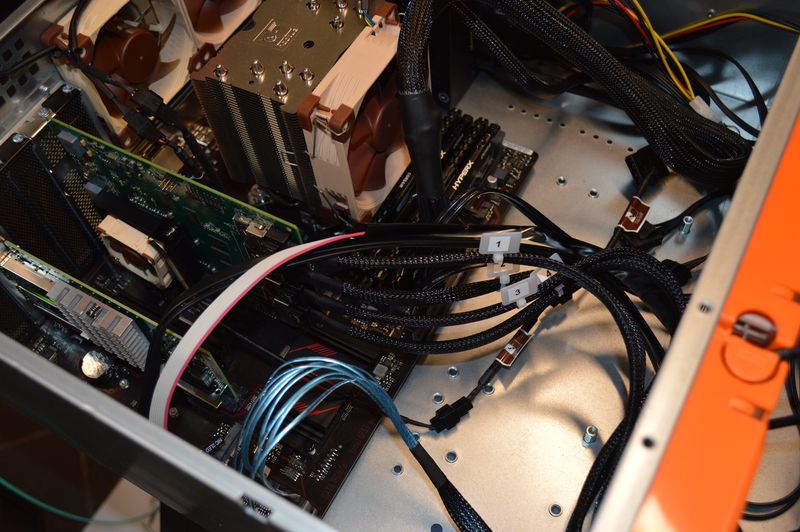

My storage server case: Inter-Tech 4U-4416

New LSI controller

My file server had an 8-channel LSI card, and because of the 10 GbE NIC I didn’t have enough PCIe slots available to add a second one. So instead I got a 16-channel LSI card off eBay 😄

I noticed the heat sink on the new LSI controller card was hot, really hot, like burning my finger hot 😮 I ordered a Noctua NF-A4x10 FLX 40mm fan and attached that to the heat sink.

I just placed the fan on top, and used some long machine screws to mount it directly to the heat sink.

I don’t have any temperature measurements, but I can now touch it and it feels only slightly warm.

New power supply

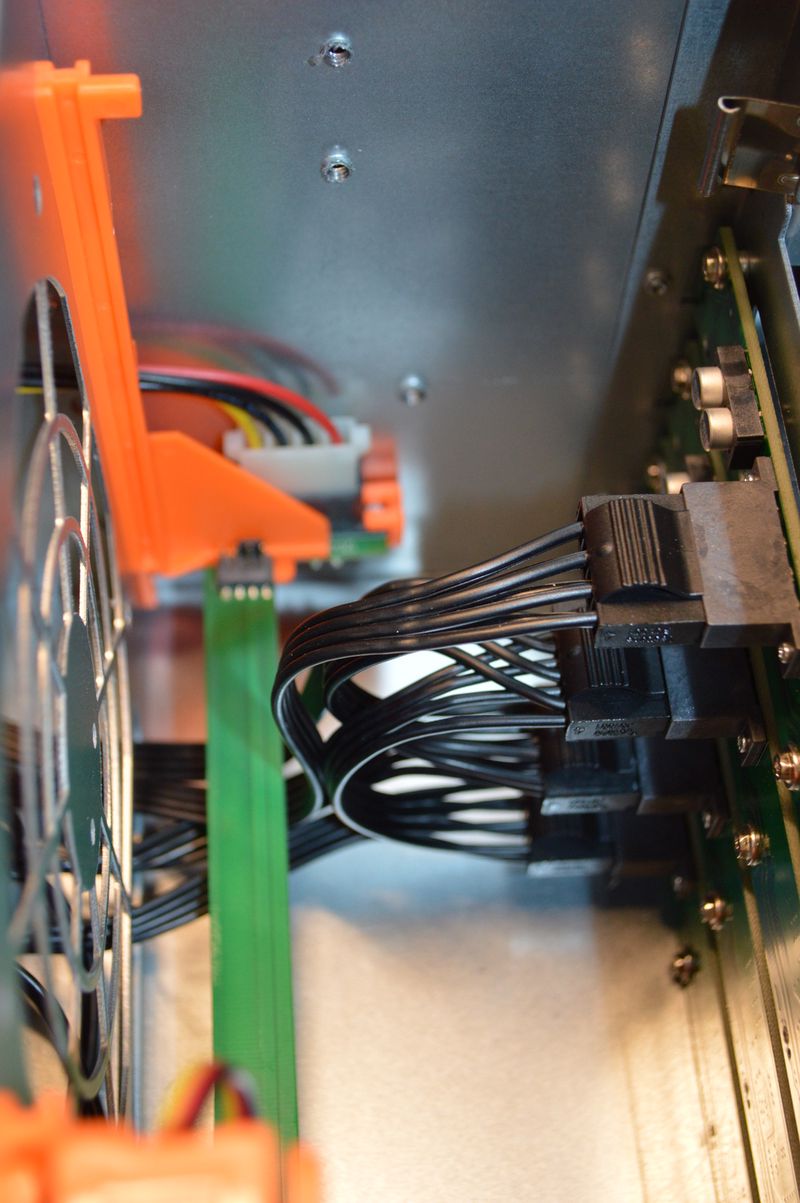

I also purchased a new 750 W modular power supply, because with 16 disks the start-up current can be significant. The Seagate IronWolf 4 TB has a specified start-up current of 1.8 A on the 12 V rail. Using that figure for every drive, that is 7.2 A or 86 W per backplane (four drives), totalling 29 A or 346 W for 16 drives.
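The start-up arithmetic above is easy to reproduce. The sketch below assumes the 1.8 A figure applies to the 12 V rail and that every drive draws the same start-up current as the IronWolf, which is a simplification since the array mixes several drive models. The function name `startup_load` is just for illustration.

```python
# Start-up load estimate for the drive backplanes.
# Assumptions: 1.8 A start-up current per drive on the 12 V rail,
# identical for all drives, and all drives spinning up simultaneously.
STARTUP_CURRENT_A = 1.8
RAIL_VOLTAGE_V = 12.0
DRIVES_PER_BACKPLANE = 4
TOTAL_DRIVES = 16

def startup_load(drives):
    """Return (amps, watts) drawn on the 12 V rail by `drives` drives."""
    amps = drives * STARTUP_CURRENT_A
    return amps, amps * RAIL_VOLTAGE_V

bp_amps, bp_watts = startup_load(DRIVES_PER_BACKPLANE)
all_amps, all_watts = startup_load(TOTAL_DRIVES)

print(f"per backplane: {bp_amps:.1f} A, {bp_watts:.0f} W")
print(f"all drives:    {all_amps:.1f} A, {all_watts:.0f} W")
```

This is a worst case; staggered spin-up on the controller spreads the load out over time.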

A Molex connector is rated at 5 A, and so is the 0.75 mm² cable typically used. I didn’t want to daisy-chain too many backplanes, as that could overload the connector and wire. A modular power supply made it a bit easier to spread the load across multiple Molex cables.

An intelligent disk controller, like the LSI, typically doesn’t spin up all disks at the same time, precisely to avoid the start-up current spike.

Wrapping up

The migration was a success: I went from mdadm to ZFS, doubled the number of drives (8 to 16), and added 12 TB of usable space.

My 2nd VDEV is currently severely underutilized because of the 2 TB drives, but I will be replacing those with 8 TB drives in the future. With ZFS, you can replace a drive with a bigger one, but you cannot change the number of drives in a VDEV.
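To illustrate why the 2nd VDEV stays underutilized until the very last small drive is swapped, here is a small sketch. It assumes the VDEV is RAIDz2 and that usable space is bounded by the smallest member disk; `raidz2_usable_tb` is a hypothetical helper, and overhead is ignored.

```python
# Usable space of the mixed vdev as the 2 TB disks are replaced one by one.
# Assumption: RAIDz2, where every disk counts as the smallest member.

def raidz2_usable_tb(disk_sizes_tb):
    """Approximate usable TB of a RAIDz2 vdev (two disks' worth of parity)."""
    return (len(disk_sizes_tb) - 2) * min(disk_sizes_tb)

disks = [8, 8, 8, 8, 2, 2, 2, 2]
history = [raidz2_usable_tb(disks)]   # usable space before any replacement

for i, size in enumerate(disks):
    if size == 2:
        disks[i] = 8                  # swap one 2 TB disk for an 8 TB disk
        history.append(raidz2_usable_tb(disks))

print(history)  # [12, 12, 12, 12, 48]
```

Under these assumptions the extra space only shows up after the fourth replacement, so the upgrade pays off all at once rather than disk by disk.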

Disk temperatures

hebron@zeta:~$ sudo hddtemp /dev/sd[a-p]

/dev/sda: ST4000VN008-2DR166: 30°C

/dev/sdb: WDC WD40EFRX-68N32N0: 30°C

/dev/sdc: WDC WD40EFRX-68N32N0: 33°C

/dev/sdd: WDC WD40EFRX-68N32N0: 33°C

/dev/sde: WDC WD40EFRX-68N32N0: 37°C

/dev/sdf: WDC WD40EFRX-68N32N0: 36°C

/dev/sdg: WDC WD2003FZEX-00SRLA0: 38°C

/dev/sdh: WDC WD2003FZEX-00SRLA0: 37°C

/dev/sdi: ST4000VN008-2DR166: 29°C

/dev/sdj: ST2000DM008-2FR102: 33°C

/dev/sdk: ST4000VN008-2DR166: 32°C

/dev/sdl: ST2000DM008-2FR102: 35°C

/dev/sdm: TOSHIBA HDWN180: 40°C

/dev/sdn: TOSHIBA HDWN180: 34°C

/dev/sdo: TOSHIBA HDWN180: 38°C

/dev/sdp: WDC WD80EFZX-68UW8N0: 35°C
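For a quick summary of readings like these, the output can be parsed with a short script. This is a sketch that assumes the `device: model: temperature°C` line format shown above; the regular expression and the `parse_hddtemp` helper are my own and are not part of hddtemp.

```python
import re

# hddtemp output copied from the listing above.
HDDTEMP_OUTPUT = """\
/dev/sda: ST4000VN008-2DR166: 30°C
/dev/sdb: WDC WD40EFRX-68N32N0: 30°C
/dev/sdc: WDC WD40EFRX-68N32N0: 33°C
/dev/sdd: WDC WD40EFRX-68N32N0: 33°C
/dev/sde: WDC WD40EFRX-68N32N0: 37°C
/dev/sdf: WDC WD40EFRX-68N32N0: 36°C
/dev/sdg: WDC WD2003FZEX-00SRLA0: 38°C
/dev/sdh: WDC WD2003FZEX-00SRLA0: 37°C
/dev/sdi: ST4000VN008-2DR166: 29°C
/dev/sdj: ST2000DM008-2FR102: 33°C
/dev/sdk: ST4000VN008-2DR166: 32°C
/dev/sdl: ST2000DM008-2FR102: 35°C
/dev/sdm: TOSHIBA HDWN180: 40°C
/dev/sdn: TOSHIBA HDWN180: 34°C
/dev/sdo: TOSHIBA HDWN180: 38°C
/dev/sdp: WDC WD80EFZX-68UW8N0: 35°C
"""

# Assumed line format: "<device>: <model>: <temperature>°C"
LINE_RE = re.compile(r"^(/dev/\S+): (.+): (\d+)°C$")

def parse_hddtemp(text):
    """Return a list of (device, model, temp_c) tuples, skipping other lines."""
    readings = []
    for line in text.splitlines():
        match = LINE_RE.match(line.strip())
        if match:
            device, model, temp = match.groups()
            readings.append((device, model, int(temp)))
    return readings

readings = parse_hddtemp(HDDTEMP_OUTPUT)
hottest = max(readings, key=lambda r: r[2])
coolest = min(readings, key=lambda r: r[2])
mean = sum(r[2] for r in readings) / len(readings)

print(f"hottest: {hottest[0]} ({hottest[1]}) at {hottest[2]}°C")
print(f"coolest: {coolest[0]} ({coolest[1]}) at {coolest[2]}°C")
print(f"mean:    {mean:.1f}°C over {len(readings)} disks")
```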

Blinkenlights

Last commit 2024-04-05, with message: Tag cleanup.